CONCEPT CHECK : AI CHIPS

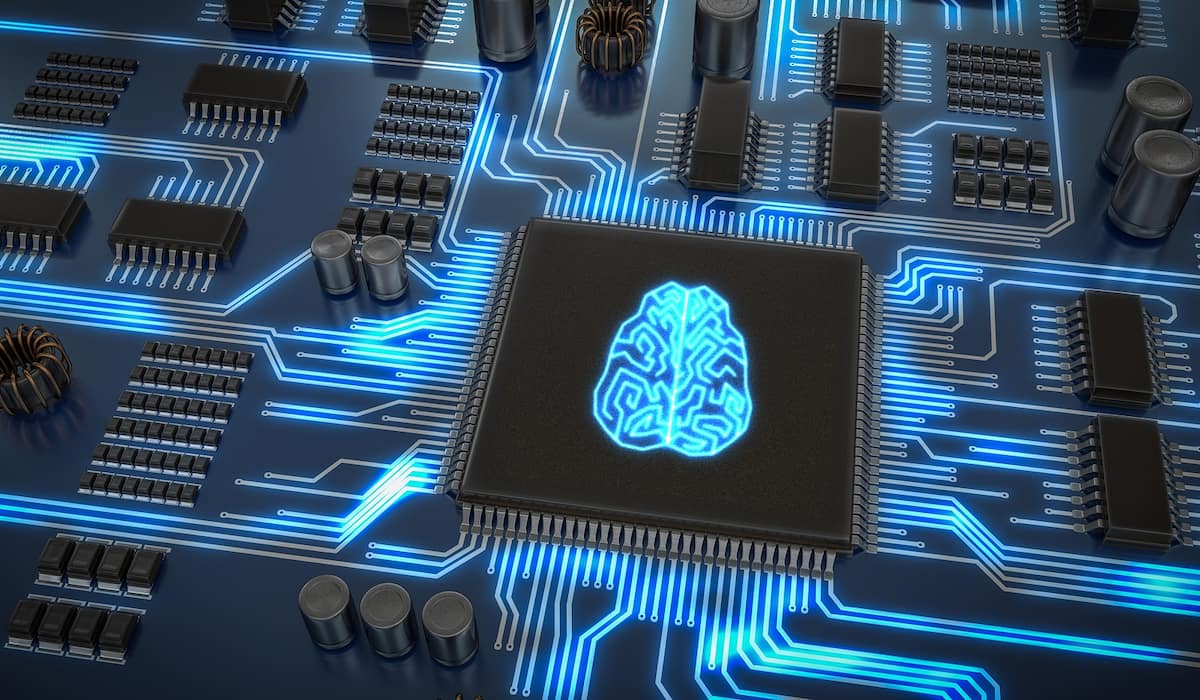

WHAT ARE AI CHIPS?

• AI chips are built with specialised architectures and integrated AI acceleration to support deep learning-based applications.

• Deep learning, which is built on artificial neural networks (ANNs), in particular deep neural networks (DNNs) with many layers, is a subset of machine learning and falls under the broader umbrella of AI. It uses layered algorithms loosely modelled on the structure and activity of the brain.

• DNNs first go through a training phase, in which they learn new capabilities from existing data. They can then perform inference, applying the capabilities learned during training to make predictions on previously unseen data.

• Deep learning can make the process of collecting, analysing and interpreting enormous amounts of data faster and easier.

• These chips, together with complementary packaging, memory, storage and interconnect technologies, make it possible to infuse AI into a broad spectrum of applications, helping to turn data into information and then into knowledge.

• There are different types of AI chips designed for diverse AI applications, such as application-specific integrated circuits (ASICs), field-programmable gate arrays (FPGAs), central processing units (CPUs) and graphics processing units (GPUs).
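The training and inference phases described above can be illustrated with a deliberately tiny Python sketch. It is a toy under stated assumptions, not how AI chips or deep learning frameworks work internally: a single artificial neuron (one weight and one bias, no hidden layers) is trained by gradient descent on hypothetical data following y = 2x + 1, and is then used to predict the output for an input it never saw during training. The names `train` and `infer`, and the learning-rate and epoch values, are illustrative choices.

```python
# Hypothetical training data: points on the line y = 2x + 1 (no noise).
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [2.0 * x + 1.0 for x in train_x]


def train(xs, ys, lr=0.02, epochs=2000):
    """Training phase: fit weight w and bias b by batch gradient descent
    on the mean squared error between predictions and known outputs."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Gradients of MSE = (1/n) * sum((w*x + b - y)^2) w.r.t. w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def infer(w, b, x):
    """Inference phase: apply the learned parameters to a new input."""
    return w * x + b


w, b = train(train_x, train_y)
print(f"learned w={w:.3f}, b={b:.3f}, prediction for x=10: {infer(w, b, 10.0):.3f}")
```

After training, the weight and bias end up very close to 2 and 1, so the prediction for the unseen input x = 10 is close to 21. A deep neural network follows the same train-then-infer pattern, but with many layers and millions of weights, which is the workload AI chips are built to accelerate.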

ARE THEY DIFFERENT FROM TRADITIONAL CHIPS?

• When traditional chips, which contain processor cores and memory, perform computational tasks, they continuously move commands and data between these two hardware components. Such chips are not ideal for AI applications, as they cannot keep up with the higher computational demands of AI workloads, which involve huge volumes of data. Some higher-end traditional chips, however, may be able to handle certain AI applications.

• In comparison, AI chips generally contain processor cores as well as several AI-optimised cores (the number depends on the scale of the chip) that are designed to work in harmony when performing computational tasks. The AI cores are optimised for heterogeneous enterprise-class AI workloads, and their close integration with the other processor cores, which handle non-AI applications, enables low-latency inferencing.

• In essence, AI chips reimagine the architecture of traditional chips, enabling smart devices to perform sophisticated deep learning tasks such as object detection and segmentation in real time with minimal power consumption.
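The data-movement problem described above can be made concrete with a back-of-the-envelope calculation. The Python sketch below assumes a single fully connected neural-network layer with 32-bit (4-byte) values, and assumes that every weight, input and output crosses the memory bus exactly once; real chips use caches and on-chip buffers, so this is a simplification. The function name `dense_layer_traffic` and the layer size of 4096 are hypothetical.

```python
def dense_layer_traffic(n_in, n_out, batch=1, bytes_per_value=4):
    """Rough arithmetic-vs-memory-traffic estimate for one fully connected layer.

    Simplifying assumption: every weight, input value and output value is
    moved between memory and the processor exactly once.
    """
    # Each weight is used in one multiply and one add per input in the batch.
    flops = 2 * n_in * n_out * batch
    # Weights are fetched once; inputs are read and outputs written per sample.
    values_moved = n_in * n_out + batch * (n_in + n_out)
    bytes_moved = values_moved * bytes_per_value
    return flops, bytes_moved, flops / bytes_moved


for batch in (1, 64):
    flops, moved, intensity = dense_layer_traffic(4096, 4096, batch=batch)
    print(f"batch={batch}: {flops:,} FLOPs, {moved / 2**20:.1f} MiB moved, "
          f"{intensity:.2f} FLOPs per byte")
```

For a single input, this layer performs about 33.5 million floating-point operations but moves about 64 MiB of data, roughly half an operation per byte fetched, so a conventional processor spends much of its time waiting on memory. Reusing each fetched weight across a batch of 64 inputs raises the ratio to about 31 operations per byte, which illustrates why keeping data close to the compute units matters for AI workloads.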

WHAT ARE THEIR APPLICATIONS?

• Semiconductor firms have developed various specialised AI chips for a multitude of smart machines and devices, including some that are said to bring the performance of a data centre-class computer to edge devices.

• Some of these chips enable in-vehicle computers to run state-of-the-art AI applications more efficiently.

• AI chips also power computational imaging applications in wearable electronics, drones and robots.

• The use of AI chips for natural language processing (NLP) applications has increased due to rising demand for chatbots and online channels such as Messenger, Slack and others, which use NLP to analyse user messages and manage conversational logic. Some chipmakers have also built AI processors with on-chip hardware acceleration, designed to help customers gain business insights at scale across banking, finance, trading and insurance applications, as well as customer interactions.